Nvidia has announced the new GeForce RTX 4070 Ti, the card previously known as the RTX 4080 12GB. The price has been dropped from $899 to $799 and it will be available starting January 5.

The story of the RTX 4080 12GB is well known at this point. After an unprecedented amount of pushback over its specifications, naming and pricing, the card was “unlaunched” and shelved for a future release. Now it has reappeared with a slightly different name and a slightly lower price.

Aside from that, this is still the same RTX 4080 12GB. You still get less than half the core count of the 4090, half the memory, and half the memory bus width, for what is now half the price. That might seem logical at first, but the 4090 is a halo product that isn’t supposed to be good value. It absolutely shouldn’t be the yardstick for configuring and pricing lower models in the series, or we risk ending up with a $400 RTX 4050. As you can tell, not a lot has changed on that front with the 4070 Ti.
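
For readers who want to check the "half of everything" comparison themselves, here is a rough sketch of the arithmetic. The core counts, memory sizes, bus widths and the 4090's $1,599 launch price are assumed from Nvidia's public launch specifications rather than taken from this article, and the `spec_ratios` helper is purely illustrative.

```python
# Figures are assumed from public launch specifications, not from this article.
# Verify them against Nvidia's spec sheets before relying on the output.
RTX_4090 = {"cuda_cores": 16384, "memory_gb": 24, "bus_width_bits": 384, "price_usd": 1599}
RTX_4070_TI = {"cuda_cores": 7680, "memory_gb": 12, "bus_width_bits": 192, "price_usd": 799}


def spec_ratios(card, reference):
    """Return each spec of `card` as a fraction of the same spec on `reference`."""
    return {key: card[key] / reference[key] for key in reference}


ratios = spec_ratios(RTX_4070_TI, RTX_4090)
for name, value in ratios.items():
    # Print each ratio as a percentage of the 4090's figure.
    print(f"{name}: {value:.2%}")
```

Under those assumed figures, the core count comes out a little under half of the 4090's, while memory, bus width and price all land at or very close to half.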

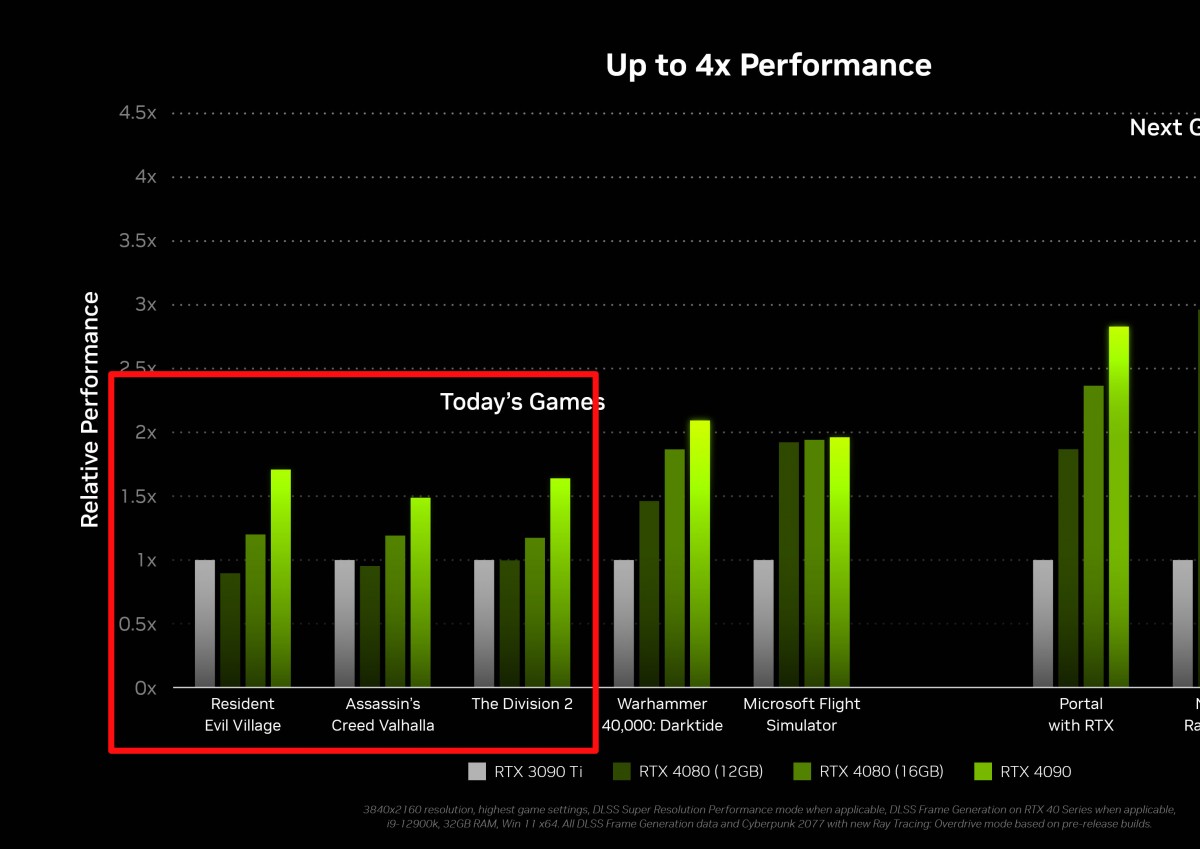

Nvidia claims the 4070 Ti is faster than the RTX 3090 Ti but only provides comparisons with DLSS3 enabled, a feature the 3090 Ti does not support. There is still one first-party graph available from the original launch that shows performance in non-DLSS titles, and there the 4070 Ti seems to offer roughly 80-100% of the 3090 Ti’s performance. This puts it only slightly ahead of the RTX 3080 10GB, a card launched back in 2020 for $699.

Fortunately, while rasterization performance doesn’t seem to have moved at all in over two years, the 4070 Ti should benefit from other Ada Lovelace advancements, including improved ray tracing performance, better efficiency (Nvidia claims 226W power consumption while gaming), and an improved media encoder with AV1 encoding support. DLSS3, while not ideal for cross-generational comparisons, has also proved to be a useful feature and is rapidly growing in adoption.

The RTX 4070 Ti will be available from ASUS, Colorful, Gainward, GALAX, GIGABYTE, INNO3D, KFA2, MSI, Palit, PNY and ZOTAC. There will be no Founders Edition model from Nvidia.